New research from the Rithm Project surveyed 2,383 teens and young adults to understand how AI is shaping their relationships. While most use AI for information and tasks, a notable group are turning to AI characters for emotional support, with over half of this group reporting they feel they have no one to turn to. These findings offer an important window into how some teens are really using AI, and why parents need to be having these conversations.

Hi everyone. I recommend pairing this blog with an encore of one of my favorite podcast episodes, called Kids Using AI Chatbots: The Risks Parents Can’t Ignore, so do check that out.

I talk with many teens and parents and often ask whether they have had conversations about AI together, and most say “no.” I wrote this blog to help spark these important conversations.

The Rithm Project, whose mission is “building human connection in the age of AI,” recently released data from a survey of 2,383 young people ages 13 to 24.

The goal was to better understand why teens do or don't use AI, how their current relationships affect how they use it, and how their use or non-use affects their relationships.

Because this survey captures data at a single point in time, only correlation can be inferred, not causation. A different design would be needed to tease out causation. (Could your child or students come up with a study design that could establish causation?)

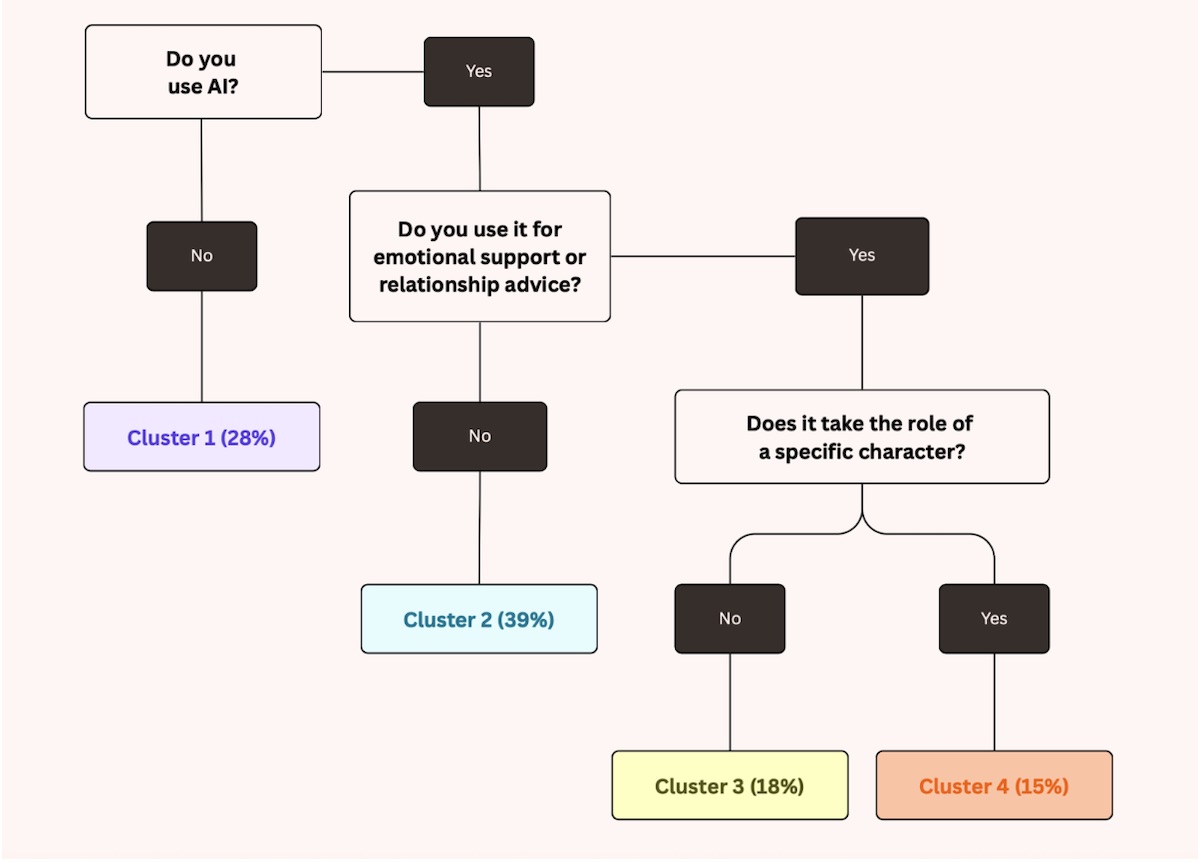

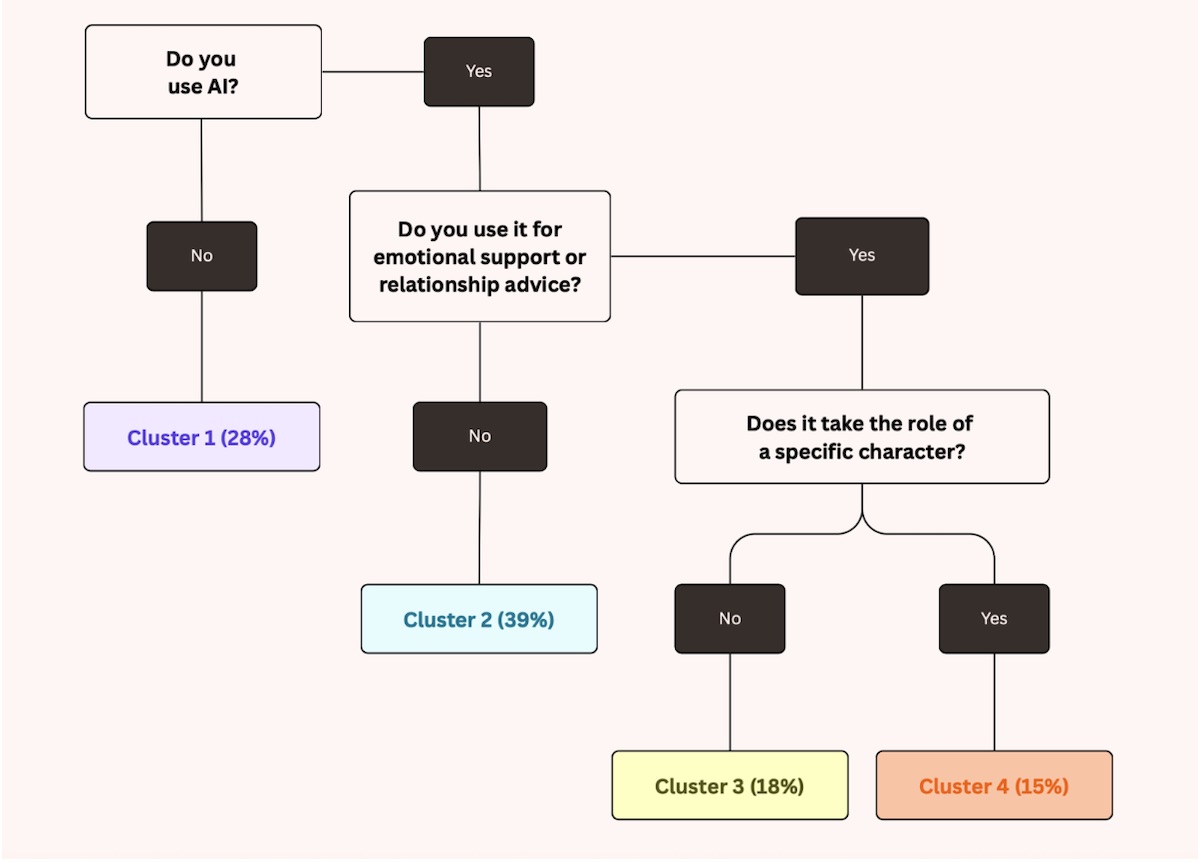

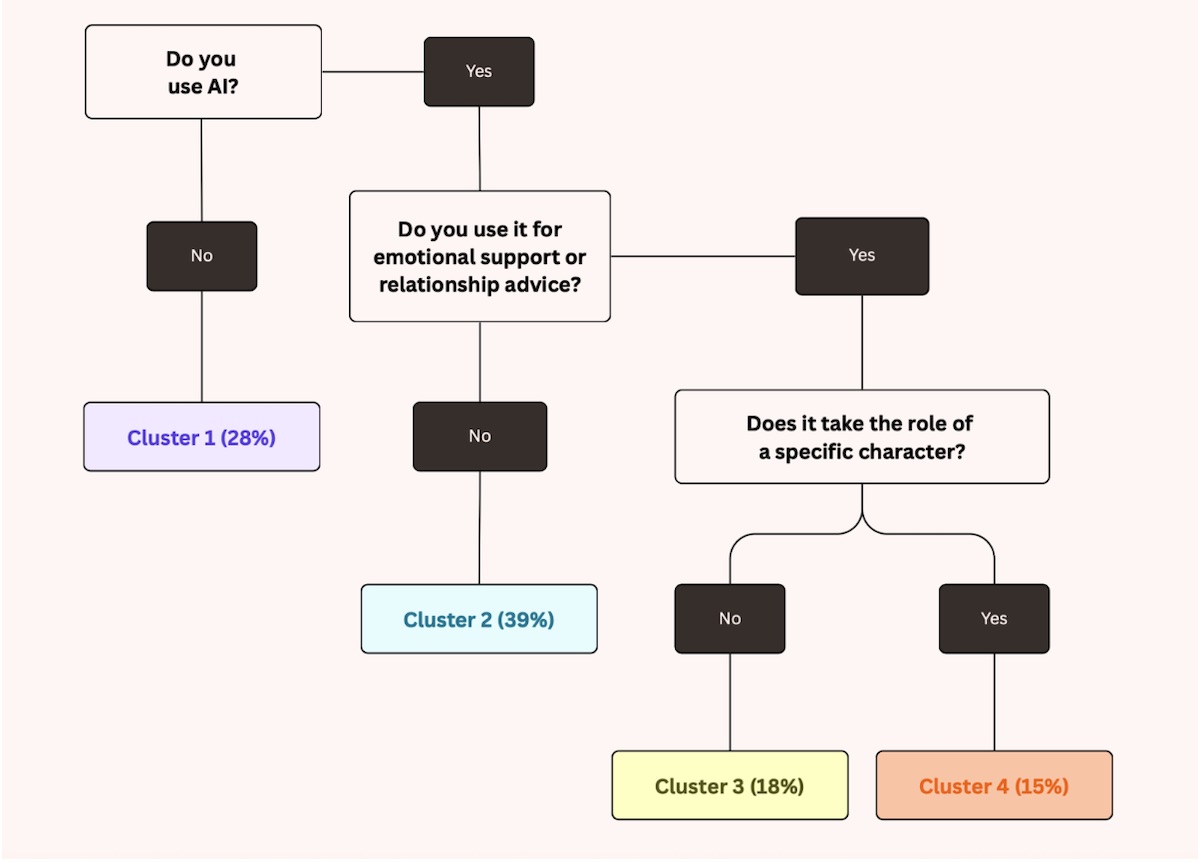

Then, the researchers sorted the remaining respondents, those who said “yes, they use it,” into other clusters.

Respondents in Clusters 3 and 4 surely also use AI for other things, but because the study primarily examines the impact on relationships, the clusters were created accordingly.

The researchers found that the term "AI companion" carries real stigma with teens, who dismissed synthetic relationships as "weird and embarrassing."

Instead, "AI character" resonated much more. It avoids the shame associated with "companion" and better captures the range of ways young people actually engage with personified AI, from chatting with celebrity personas to getting advice from a virtual professional.

Through the survey questions, the researchers divided AI users into three groups: The Bestie, The Gamer, and The Expert Seeker.

The report is chock-full of findings, but I’m only focusing on a few related to Clusters 1 and 4.

As mentioned, they found that 28% of all respondents reported that they use AI rarely or not at all.

Through other questions on the survey, the researchers divided this cluster into two groups: “conscious objectors” and “AI non-participants.”

For many months, I have been talking with young people ages 16 to 24 who are very concerned about AI and rarely use it. They very frequently cite environmental concerns, in particular all the water used by data centers.

The hit to the environment is real. I have been surprised that teens cite water issues far more often than energy concerns. I bet that they will start talking more about energy use with time.

The AI non-participants often cite things like "AI gets things wrong" or "it will make me stupid" as reasons for not using it.

The researchers found that 15% of respondents who say they use AI said that they use AI characters (Cluster 4).

They found that these respondents answered yes to the following statement significantly more often than people in the other clusters: “turn to AI more than people sometimes or more.”

The point here is that if we know kids and teens who are using AI characters, we need to calmly ask questions such as: Are they turning to the characters with personal questions? What other adults besides you do they feel they can turn to?

Another concerning finding in this group is that 51% of them feel like they have no one to turn to. Again, this is the highest of any cluster and warrants attention.

I've talked often about the idea of a "vulnerable village," and these AI times are calling for it more than ever. Many teens are not going to tell their parents they are using AI characters. If we hear of a teen who is, we should find thoughtful, safe, and respectful ways to address it together.

If you hear from your teen that another teen is using AI characters in ways that concern you, one place to start is talking with other parents about how you might approach the situation together, whether that means reaching out to the teen, their parents, or both.

This is not easy, but our tech revolution warrants a family revolution. We have to stop treating family life as private life. We need each other!

Sign up here to receive the weekly Tech Talk Tuesdays newsletter from Screenagers filmmaker Delaney Ruston MD.
